(Getty Images/Rob Dobi)

Throughout 2024, the ADL Center on Extremism documented the tactics deployed by extremists and purveyors of hate to promote false narratives, as well as the harmful impact of conspiracy theories, misinformation and disinformation on communities including Jews, immigrants and other marginalized groups.

Predicting how extremists may weaponize false narratives requires an understanding of the strategies that allow them to spread most effectively. Here, we highlight three key mis- and disinformation trends and tactics that saw success throughout 2024 and could deeply impact the extremist landscape in 2025 and beyond.

Using Generative Artificial Intelligence (GAI) to spread hate and propaganda

In recent years, the ADL Center on Extremism has highlighted how purveyors of conspiracy theories and hate use generative artificial intelligence (GAI) tools to promote disinformation, extremist rhetoric and harmful content. The year 2024 proved no different, but advances in this technology made these tools more pernicious as social media continued to serve as fertile ground for AI-generated hate.

Videos showing English-language Hitler speeches once again went viral in September 2024, following similar content that circulated widely on X (formerly Twitter) earlier in the year, and on fringe platforms in 2023. Other types of audio-based GAI content popularized in 2024 came from apps like Suno, which users exploited to create AI-generated songs promoting hate, conspiracy theories and violence.

Song lyrics praising mass shooter Brenton Tarrant (who is serving life in prison for the 2019 killings at two mosques in Christchurch, New Zealand) as courageous, saying “he saved us all.” (Suno/Screenshot)

In April 2024, hateful GAI images migrated beyond the screen when the Michigan chapter of the white supremacist group White Lives Matter (WLM) rented roadside billboards in the metro Detroit area. These included white supremacist dog whistles alongside an image of Hitler that appeared to be AI-generated, making the billboard one of the earliest examples of GAI content in offline extremist propaganda tracked by ADL.

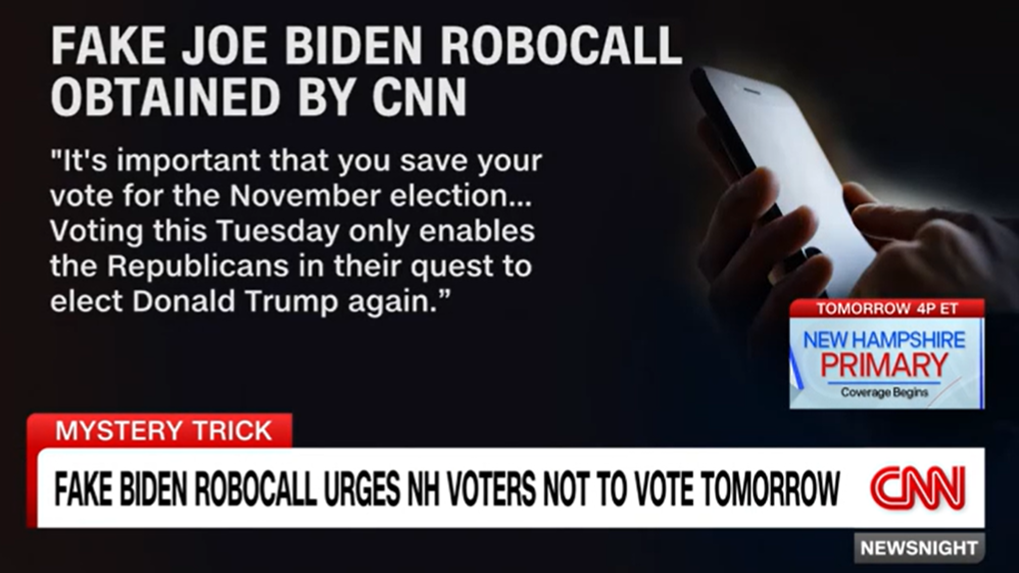

Ahead of the 2024 U.S. presidential election, promoters of disinformation used GAI content to influence voter sentiment, including synthetic speech robocalls and fabricated images. They also leveraged the “liar’s dividend” phenomenon to discredit factual information, suggesting that authentic images, such as photos of a crowd at a Kamala Harris rally in Detroit, were actually fabricated to deceive the masses.

An excerpt from a robocall using synthetic speech of Joe Biden's voice, aired on CNN. (Source: CNN)

While foreign influence campaigns are nothing new to the U.S., multiple disinformation operations out of Iran, Russia and China surfaced throughout 2024 — some of which used GAI to enhance their efforts. In September 2024, the Department of Justice cracked down on a series of disinformation campaigns known as “Doppelganger,” crafted by Russian propagandists to spread manipulative content online. Strategies included “cybersquatting,” or registering domains meant to impersonate real domains, where they hosted falsified news articles — some of which were reportedly written with the help of AI tools like ChatGPT.

A Chinese-backed influence campaign, referred to by analytics firm Graphika as “Spamouflage,” also used GAI content, including deepfake videos, to spread divisive messaging related to U.S. politics and social issues throughout 2024. On X, some posts connected to the campaign depicted former President Biden and President Donald Trump fighting as action movie-type rivals.

We already know that these tools are advancing at a rapid pace and that GAI-driven disinformation will only yield more convincing content over time. GAI tools may also be weaponized by people seeking to carry out acts of violence; this has already transpired in 2025, as evidenced by Matthew Livelsberger — the man responsible for the coordinated explosion of a Cybertruck outside a Las Vegas hotel on January 1, 2025 — who police say used ChatGPT to plan the deadly event.

Increased scapegoating of Israel and Zionists

Since Hamas’s deadly attack against Israel on October 7, 2023, mis- and disinformation narratives about Israel have fueled hate against Zionists and Jews at large. False claims attributing horrific world events to Israel have been commonplace for years among antisemitic figures and extremists, but this rhetoric has demonstrably worsened since the start of the Israel-Hamas war and throughout 2024.

On March 22, 2024, four gunmen affiliated with the Islamic State–Khorasan Province carried out a deadly terror attack at Crocus City Hall, a venue outside of Moscow. Although the Islamic State group claimed responsibility for the attack, extremists and conspiracy theorists alleged that terrorist groups like ISIS are a product of Israel.

Anti-Zionist influencer Anastasia Maria Loupis equates Israel to ISIS in a March 2024 post on X. (X/Screenshot)

On May 19, 2024, a helicopter carrying Iranian President Ebrahim Raisi and seven others crashed near Uzi, Iran, killing everyone on board. While investigators determined that the crash was caused by “challenging climatic and atmospheric conditions,” anti-Zionists on social media suggested the crash was coordinated by the CIA and Mossad as retaliation against Iran. Some suggested that Israel modified the weather using cloud seeding to target the helicopter.

On July 29, 2024, a teenage boy attacked a dance class in Southport, England, stabbing three young girls to death and injuring several others. In addition to false claims alleging that the suspect was a Muslim immigrant seeking asylum in the country — which ultimately sparked far-right riots across Great Britain and Ireland — antisemitic and anti-Zionist influencers claimed the riots were the result of an Israeli or Zionist plot to sow unrest.

On November 29, 2024, a coalition of rebel groups including Hayat Tahrir al-Sham (HTS) carried out attacks in Syria and seized the city of Aleppo, eventually leading to the fall of Bashar al-Assad’s regime. Anti-Zionist influencers quickly spread baseless narratives about the attacks, suggesting they were secretly and deliberately coordinated by Israel, or that Israel worked with the Biden administration to help HTS carry out their plan.

Anti-Zionist influencer “Syrian Girl” falsely suggests that Israel-backed groups were involved in the Aleppo attacks in November 2024. (X/Screenshot)

The immediate blame placed on Zionists, Israel and the U.S. government follows a familiar pattern among extremists and conspiracy theorists: leveraging newsworthy events to promote hate. Israel will likely be blamed for many other domestic and geopolitical events due to the sentiments surrounding the Israel-Hamas war and increased antisemitism around the world. The first few weeks of 2025 have made this clear, as Israel and Zionists have already been blamed for the terror attack in New Orleans and the wildfires in Los Angeles.

Leveraging social media changes to promote mis- and disinformation

The ever-changing nature of social media — including amendments to moderation guidelines, shifts in ownership and the introduction of new platforms — creates ample opportunities for extremists and conspiracy theorists to promote harmful mis- and disinformation.

Throughout 2024, X continued to be a hotbed of hate and false narratives. In the six months following October 7, 2023, prominent far-right influencers received increased engagement on the platform, where they freely promoted conspiracy theories about Jews, Israel, Zionism and the Israel-Hamas war. Far-left influencers also took part in promoting these false narratives on X, including denying that victims of October 7 were sexually assaulted. Notably, in a 2024 report, the ADL Center on Technology and Society found that several mainstream social media platforms failed to act on reports of antisemitic hate speech and conspiracy theories. Of all the platforms reviewed, X scored the lowest.

Far-right influencer Jackson Hinkle suggests a photo showing the bloody aftermath of the October 7 attacks was fabricated in an October 2024 post on X. (X/Screenshot)

In 2024, Congress enacted the Protecting Americans from Foreign Adversary Controlled Applications Act, requiring the Chinese company ByteDance to divest TikTok or see the app shut down in the U.S. by January 19, 2025. Since then, extremists and conspiracy theorists across the political spectrum have suggested that Israel, Zionists or Jews at large were behind the proposed ban to censor anti-Israel voices on the platform.

Following the November 2024 election, users migrated to Bluesky with the hope that it would more effectively address misinformation, disinformation and hate speech than its competitors. Yet the increased attention on the platform led some to push the limits of those moderation efforts. For example, the Russian media outlet RT, known for promoting state-sponsored propaganda and disinformation, announced that it had joined Bluesky, supposedly “to test how long it will take for the admins to ban us.” Lists describing Zionists as “scum” and “garbage” have also emerged on the platform, along with inauthentic bot networks and fraudulent accounts posing as legitimate organizations, including the ADL.

A moderation list deeming select users on the platform “Zionist scum.” (Bluesky/Screenshot)

Early changes across the social media landscape in 2025 do not bode well for efforts to address hate and false narratives online. Congress’ TikTok divestiture law continues to fuel antisemitic, anti-Israel and anti-Zionist narratives, and has inspired some users to migrate to the Chinese social media platform RedNote, also known as Xiaohongshu. While some extremists and purveyors of hate have since joined the platform, others expressed paranoia that RedNote is also controlled by Zionists or Jews, a narrative that stemmed from the discovery that one of RedNote's shareholders is DST Global, a venture capital firm founded by Israeli citizen Yuri Milner.

Meanwhile, on January 7, 2025, Meta announced plans to end its “third party fact-checking program” in favor of a model similar to X’s “Community Notes,” putting more vetting power in the hands of users, with fewer restrictions on content that falls outside severe platform violations or illegal activity.

Soon after these changes were announced, some white supremacist groups suggested leveraging the more lenient rules “to push for mass deportations and race-based immigration policies” on Meta platforms like Facebook and Instagram. On X, far-right conspiracy theorist Alex Jones claimed he would test out and “verify” if Meta platforms were truly turning into havens of free speech.